Recap

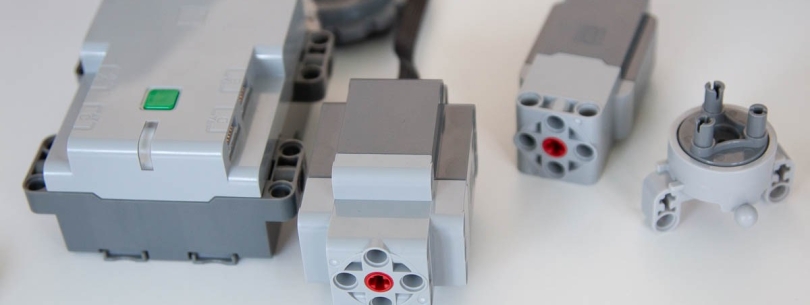

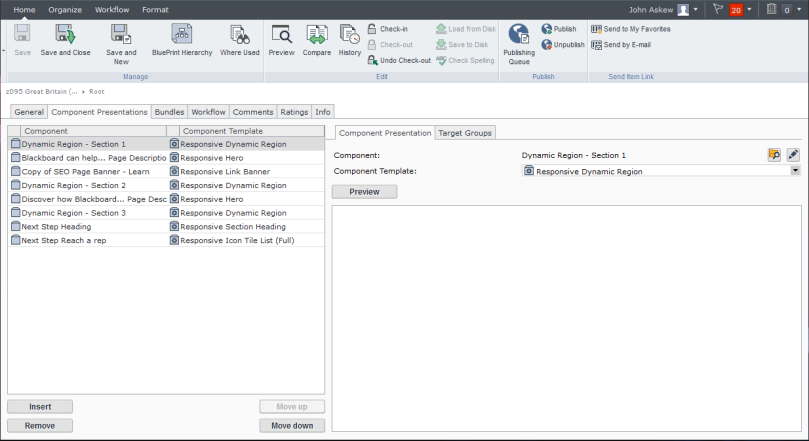

This is a follow-up to my previous post, which covered how I went about creating a C# SDK for the most recent version of LEGO's Bluetooth (Low Energy) protocol specification, used in a range of their PoweredUp products. Read more here.

Inputs (Sensors)

The biggest improvement so far has been the work to read sensor data from connected devices. A lot of upstream and downstream messages are required to coordinate this, which I detail below for those who are interested.

Input Modes

The input modes are a logical separation allowing a single connected device (e.g. motor) to have different modes of operation for which you can send (output) or receive (input) data.

The protocol allows us to interact with the connected device using either a single mode (e.g. Speed) or a combined mode where supported (e.g. Speed, Absolute Position, and Position).

Single Input

To use single input mode we need to set the desired input mode, delta to trigger updates, and flag whether to notify us (push data) or not (poll data).

This is done by sending a Port Input Format Setup (Single) message which contains:

- Port # of the connected device (e.g. 0)

- Mode we wish to set (e.g. Mode 1 / Speed)

- Delta of change which should trigger an update (e.g. 1)

- Notify flag whether to automatically send an upstream message when the delta is met.
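
To make the fields above concrete, here is a minimal sketch of how such a message could be encoded. It is written in Python for brevity rather than the SDK's C#, and the function name is hypothetical. It assumes the layout I understand from the LEGO specification: a three-byte header (length, hub id, message type 0x41), then the port, the mode, a four-byte little-endian delta, and the notify flag.

```python
import struct

# Assumed message type for Port Input Format Setup (Single).
PORT_INPUT_FORMAT_SETUP_SINGLE = 0x41


def build_single_input_setup(port: int, mode: int, delta: int,
                             notify: bool, hub_id: int = 0) -> bytes:
    """Encode a Port Input Format Setup (Single) message (hypothetical helper)."""
    # Payload: port (1 byte), mode (1 byte), delta (4 bytes, little-endian),
    # notify flag (1 byte). "<" disables padding between the fields.
    payload = struct.pack("<BBIB", port, mode, delta, 1 if notify else 0)
    # Header: total message length, hub id, message type.
    length = 3 + len(payload)
    return bytes([length, hub_id, PORT_INPUT_FORMAT_SETUP_SINGLE]) + payload


# Port 0, Mode 1 (Speed), delta of 1, push updates to us.
message = build_single_input_setup(port=0, mode=1, delta=1, notify=True)
print(message.hex(" "))
```

Setting `notify=False` produces the same message with the last byte cleared, which leaves us to poll for values instead.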

You can be quite creative using single input. We can calibrate the min and max position of a linear actuator by switching the input modes as below:

- Switch input mode to Speed.

- Move to absolute minimum position using a lower than normal power (torque).

- Monitor changes to Speed until value drops to 0.

- Switch input mode to Position.

- Record current position as calibrated minimum for device.

- Switch input mode to Speed.

- Move to absolute maximum position using a lower than normal power (torque).

- Monitor changes to Speed until value drops to 0.

- Switch input mode to Position.

- Record current position as calibrated maximum for device.

These recorded min and max positions can be stored in the SDK against the device to act as constraints for further commands before they are forwarded onto the hub.
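
The calibration steps above can be sketched as follows. This is Python rather than the SDK's C#, and `FakeActuator` is a purely hypothetical stand-in for a real device so that the logic can run without a hub. The stall detection simply assumes that Speed reads 0 once the actuator hits a physical end stop.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class FakeActuator:
    """Hypothetical simulated linear actuator; not part of the SDK."""
    position: int = 40
    hard_min: int = 0
    hard_max: int = 100
    speed: int = 0
    mode: str = "Speed"

    def set_input_mode(self, mode: str) -> None:
        self.mode = mode

    def step(self, direction: int) -> None:
        # Move one tick at low power; speed drops to 0 at an end stop.
        target = self.position + direction
        if self.hard_min <= target <= self.hard_max:
            self.position = target
            self.speed = direction * 10
        else:
            self.speed = 0

    def read(self) -> int:
        # Only the currently selected input mode is readable.
        return self.speed if self.mode == "Speed" else self.position


def find_limit(device: FakeActuator, direction: int) -> int:
    device.set_input_mode("Speed")
    while True:
        device.step(direction)
        if device.read() == 0:  # stalled against the end stop
            break
    device.set_input_mode("Position")
    return device.read()


def calibrate(device: FakeActuator) -> Tuple[int, int]:
    minimum = find_limit(device, -1)
    maximum = find_limit(device, +1)
    return minimum, maximum


def clamp(value: int, minimum: int, maximum: int) -> int:
    """Constrain a requested position to the calibrated range."""
    return max(minimum, min(maximum, value))


limits = calibrate(FakeActuator())
print(limits, clamp(150, *limits))
```

The `clamp` helper shows how the stored limits might constrain a later command before it is forwarded to the hub.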

Combined Input(s)

Whilst single input is really simple to set up and can be used to make the connected device behave much more intelligently, it is inconvenient and inefficient to have to keep switching modes.

Combining modes allows us to inform the device what combination of modes we are interested in (based on a supported range of options) and receive a message that contains information (data sets) about all modes consolidated.

Setting this up is much more complicated because so much of the required information is device specific.

Prerequisites

Information about the connected device can be obtained by sending a Port Information Request message. We actually send this message twice so that we can obtain different information types:

- Port Information

- Possible Mode Combinations

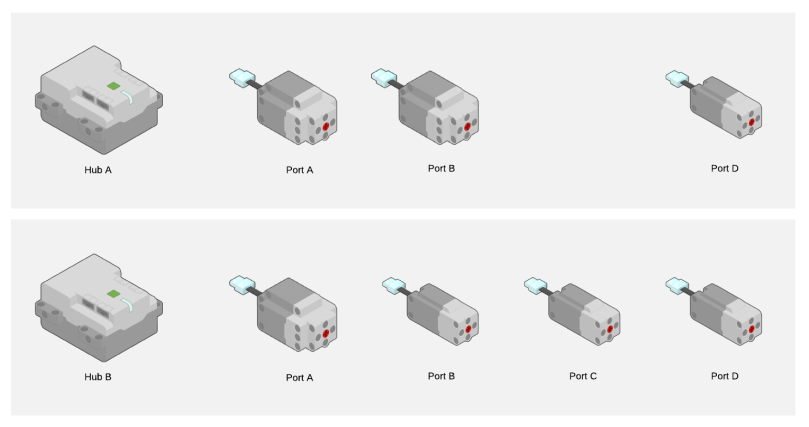

Port Information provides information from the connected device about general capabilities (e.g. input, output, combinable, synchronizable) and the modes of operation for input and output.

If the port reports that it is combinable, the subsequent message provides information about the range of mode combinations supported (e.g. Mode 1 + Mode 2 + Mode 3). We will need to reference which of these mode combinations we want to utilize later on.
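
As a rough illustration of the two requests, here is a sketch in Python (not the SDK's C#) with hypothetical helper names. It assumes message type 0x21 for the Port Information Request, information types 0x01 and 0x02 for the two requests described above, and that each supported combination comes back as a 16-bit mask in which bit n represents Mode n. Treat those values as my reading of the specification rather than something verified against the SDK.

```python
from typing import List

# Assumed protocol constants.
PORT_INFORMATION_REQUEST = 0x21
INFO_MODE_INFO = 0x01                   # what this post calls Port Information
INFO_POSSIBLE_MODE_COMBINATIONS = 0x02


def build_port_information_request(port: int, information_type: int,
                                   hub_id: int = 0) -> bytes:
    """Encode a Port Information Request (hypothetical helper)."""
    payload = bytes([port, information_type])
    return bytes([3 + len(payload), hub_id, PORT_INFORMATION_REQUEST]) + payload


def modes_in_combination(bitmask: int) -> List[int]:
    """List the mode indexes whose bit is set in a combination mask."""
    return [mode for mode in range(16) if bitmask & (1 << mode)]


# The same request is sent twice with a different information type.
requests = [
    build_port_information_request(0, INFO_MODE_INFO),
    build_port_information_request(0, INFO_POSSIBLE_MODE_COMBINATIONS),
]
for request in requests:
    print(request.hex(" "))

# A mask with bits 1, 2 and 3 set would mean Mode 1 + Mode 2 + Mode 3.
print(modes_in_combination(0b1110))
```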

Once we have this information we can determine how to interact with each mode. The main thing we are interested in for the purpose of combining inputs is the value format which communicates how many data sets to expect, the structure of the data etc.

To obtain this information we send a Port Mode Information Request message for each mode. This message contains:

- Port

- Mode

- Information Type (e.g. Value Format)

The message will trigger a response which we can intercept. In the case of Value Format we get the following information:

- Number of data sets

- Data type (e.g. 8 bit)

- Total figures

- Decimals (if any)

With this information we should have everything we need to set up our combined input(s).
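
A sketch of the request and of reading the Value Format response might look like this. It is Python rather than the SDK's C#, the names are hypothetical, and it assumes message type 0x22 for the request, information type 0x80 for Value Format, and a response that carries the four value-format bytes directly after the port, mode and information type. The sample response bytes are made up for illustration.

```python
from dataclasses import dataclass

# Assumed protocol constants.
PORT_MODE_INFORMATION_REQUEST = 0x22
INFO_VALUE_FORMAT = 0x80
DATA_TYPES = {0: "8 bit", 1: "16 bit", 2: "32 bit", 3: "float"}


@dataclass
class ValueFormat:
    data_sets: int
    data_type: str
    total_figures: int
    decimals: int


def build_port_mode_information_request(port: int, mode: int,
                                        information_type: int,
                                        hub_id: int = 0) -> bytes:
    """Encode a Port Mode Information Request (hypothetical helper)."""
    payload = bytes([port, mode, information_type])
    return bytes([3 + len(payload), hub_id,
                  PORT_MODE_INFORMATION_REQUEST]) + payload


def parse_value_format(message: bytes) -> ValueFormat:
    """Decode a Value Format response.

    Assumed layout: length, hub id, message type, port, mode,
    information type, then the four value-format bytes.
    """
    data_sets, type_code, figures, decimals = message[6:10]
    return ValueFormat(data_sets, DATA_TYPES[type_code], figures, decimals)


request = build_port_mode_information_request(0, 1, INFO_VALUE_FORMAT)
print(request.hex(" "))

# Illustrative response only: one 8 bit data set, four figures, no decimals.
sample_response = bytes([10, 0, 0x44, 0, 1, 0x80, 1, 0, 4, 0])
value_format = parse_value_format(sample_response)
print(value_format)
```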

Setup

For combined input(s) the setup requires several messages.

Firstly, we must lock the device to prevent subsequent steps from being treated as a single input setup. This is done using a Port Input Format Setup (Combined) message with the Lock LPF2 Device for setup sub-command.

Then, for each mode we wish to combine we need to send the Port Input Format Setup (Single) as detailed above.

Before we unlock the device we need to configure how the data sets will be delivered using another Port Input Format Setup (Combined) message, this time with the SetModeDataSet combination(s) sub-command.

This includes the combination mode we wish to use along with an ordered mapping of modes and data sets that we wish to consume.

An example payload could be:

- 9 = Message Length

- 0 = Hub Id

- 66 = Message Type : Port Input Format Setup (Combined)

- 0 = Port #

- 1 = Sub-Command : SetModeDataSet combination(s)

- 0 = Combination Mode Index

- 17 = Mode/DataSet[0] (1/1)

- 33 = Mode/DataSet[1] (2/1)

- 49 = Mode/DataSet[2] (3/1)

Note: This should trigger a response message to acknowledge the command but I do not receive anything currently. Issue logged here in GitHub.
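
To show where the Mode/DataSet bytes come from, here is a small Python sketch (not the SDK's C#, and with hypothetical helper names). It assumes each byte carries the mode in its upper four bits and the data set in its lower four bits, which is what the 17 = (1/1) and 33 = (2/1) entries suggest.

```python
from typing import Iterable, Tuple

PORT_INPUT_FORMAT_SETUP_COMBINED = 66   # message type from the payload above
SET_MODE_DATA_SET_COMBINATIONS = 1      # sub-command from the payload above


def pack_mode_data_set(mode: int, data_set: int) -> int:
    """Pack a mode and data set into one byte (assumed nibble layout)."""
    if not (0 <= mode <= 15 and 0 <= data_set <= 15):
        raise ValueError("mode and data set must each fit in four bits")
    return (mode << 4) | data_set


def build_set_mode_data_set(port: int, combination_index: int,
                            pairs: Iterable[Tuple[int, int]],
                            hub_id: int = 0) -> bytes:
    """Encode the SetModeDataSet combination(s) message (hypothetical helper)."""
    body = bytes([port, SET_MODE_DATA_SET_COMBINATIONS, combination_index])
    body += bytes(pack_mode_data_set(mode, data_set) for mode, data_set in pairs)
    # Header: total message length, hub id, message type.
    return bytes([3 + len(body), hub_id,
                  PORT_INPUT_FORMAT_SETUP_COMBINED]) + body


payload = build_set_mode_data_set(0, 0, [(1, 1), (2, 1), (3, 1)])
print(list(payload))
```

With this layout (1, 1) packs to 17 and (2, 1) packs to 33, and the leading length byte falls out of the number of pairs supplied.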

Finally, the device is unlocked using a third Port Input Format Setup (Combined) message with either UnlockAndStartWithMultiUpdateEnabled or UnlockAndStartWithMultiUpdateDisabled sub-commands.
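
Putting the whole setup together, the ordering of messages could be sketched like this. It is Python rather than the SDK's C#, the function and enum names are hypothetical, and the sub-command values (1 to set the combination, 2 to lock, 3 and 4 to unlock) are my reading of the specification, so treat them as assumptions.

```python
import struct
from enum import IntEnum
from typing import List, Sequence, Tuple

SETUP_SINGLE = 0x41     # Port Input Format Setup (Single)
SETUP_COMBINED = 0x42   # Port Input Format Setup (Combined)


class SubCommand(IntEnum):
    # Assumed sub-command values.
    SET_MODE_DATA_SET = 0x01
    LOCK = 0x02
    UNLOCK_MULTI_UPDATE_ENABLED = 0x03
    UNLOCK_MULTI_UPDATE_DISABLED = 0x04


def frame(message_type: int, body: bytes, hub_id: int = 0) -> bytes:
    """Prefix a body with the length, hub id and message type header."""
    return bytes([3 + len(body), hub_id, message_type]) + body


def combined_setup_sequence(port: int, combination_index: int,
                            pairs: Sequence[Tuple[int, int]],
                            delta: int = 1,
                            multi_update: bool = True) -> List[bytes]:
    """Return the messages for a combined input setup, in send order."""
    # 1. Lock the device so the single setups are not applied on their own.
    messages = [frame(SETUP_COMBINED, bytes([port, SubCommand.LOCK]))]
    # 2. One single input setup per distinct mode, preserving order.
    for mode in dict.fromkeys(mode for mode, _ in pairs):
        messages.append(frame(SETUP_SINGLE,
                              struct.pack("<BBIB", port, mode, delta, 1)))
    # 3. Ordered mode/data set mapping for the chosen combination.
    mapping = bytes((mode << 4) | data_set for mode, data_set in pairs)
    messages.append(frame(
        SETUP_COMBINED,
        bytes([port, SubCommand.SET_MODE_DATA_SET, combination_index]) + mapping))
    # 4. Unlock and start, with or without multi update.
    unlock = (SubCommand.UNLOCK_MULTI_UPDATE_ENABLED if multi_update
              else SubCommand.UNLOCK_MULTI_UPDATE_DISABLED)
    messages.append(frame(SETUP_COMBINED, bytes([port, unlock])))
    return messages


sequence = combined_setup_sequence(0, 0, [(1, 1), (2, 1), (3, 1)])
for message in sequence:
    print(message.hex(" "))
```

Returning the messages as a list, rather than sending them directly, keeps the ordering easy to inspect and leaves the actual Bluetooth write to whatever transport the SDK uses.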

Routines

Routines are simply a way to encapsulate reusable scripts into classes that can be run against devices and optionally awaited.

A good example of a routine is range calibration. By encapsulating the routine we can apply it to as many devices as required and use constructor parameters to configure it.

A routine has start and stop conditions which allow us to create iterative routines that are designed to repeat steps a number of times before completing.

It’s also possible to make the start and/or stop conditions dependent on the state of the device. For example, you could have a routine that stopped once the Speed dropped to zero etc.
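
The idea can be sketched roughly as below. This is Python with asyncio rather than the SDK's C#, and every class here (`Routine`, `CoastToStop`, `FakeMotor`) is hypothetical, written only to illustrate start and stop conditions that depend on device state. It is not how the SDK itself is structured.

```python
import asyncio
from typing import Callable, List


class FakeMotor:
    """Hypothetical simulated motor whose speed decays on each tick."""

    def __init__(self, speed: int) -> None:
        self.speed = speed

    def tick(self) -> None:
        self.speed = max(0, self.speed - 10)


class Routine:
    """Repeat a step while the stop condition has not been met."""

    def __init__(self, start_when: Callable[[FakeMotor], bool],
                 stop_when: Callable[[FakeMotor], bool],
                 max_iterations: int = 100) -> None:
        self.start_when = start_when
        self.stop_when = stop_when
        self.max_iterations = max_iterations

    async def step(self, device: FakeMotor) -> None:
        raise NotImplementedError

    async def run(self, device: FakeMotor) -> int:
        """Run against a device and return the number of steps taken."""
        if not self.start_when(device):
            return 0
        iterations = 0
        while iterations < self.max_iterations and not self.stop_when(device):
            await self.step(device)
            iterations += 1
        return iterations


class CoastToStop(Routine):
    """Starts only if the motor is moving; stops once Speed reaches zero."""

    def __init__(self, max_iterations: int = 100) -> None:
        super().__init__(start_when=lambda d: d.speed > 0,
                         stop_when=lambda d: d.speed == 0,
                         max_iterations=max_iterations)

    async def step(self, device: FakeMotor) -> None:
        device.tick()
        await asyncio.sleep(0)  # yield so other routines can make progress


async def main() -> List[int]:
    motors = [FakeMotor(30), FakeMotor(50)]
    # Apply the same routine to several devices and await them together.
    return await asyncio.gather(*(CoastToStop().run(m) for m in motors))


counts = asyncio.run(main())
print(counts)
```

The `max_iterations` constructor parameter is there as a safety net so that a routine whose stop condition never becomes true still completes.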

Next Steps

I am keen to complete the combined input(s) setup once I have resolved the issue in GitHub. That will allow me to simplify the range calibration routine and start to create additional routines that are more dynamic and intelligent.

I also want to introduce a mechanism to relate control interfaces with commands and routines. Before that, I will probably need to implement a message buffer in the SDK so that we can throttle downstream messages based on the limits of the hub's capacity to process them.